Ever felt like you’re navigating your training or a complex system with a best guess rather than a solid plan? You’ve likely encountered a situation where you had a hunch, a potential explanation for why your cycling performance plateaued, or why a particular marketing campaign didn’t quite hit the mark. This is where the power of hypothesis testing truly shines. At Explore the Cosmos, we believe in the transformative impact of data-driven analysis, and hypothesis testing is a cornerstone of that philosophy. It’s the scientific method applied to your questions, allowing us to move beyond intuition and towards validated insights. In this post, we’ll demystify hypothesis testing, explaining what it really means and how it helps us make smarter, more confident decisions, whether we’re analyzing cycling metrics or exploring complex systems.

What Exactly is Hypothesis Testing?

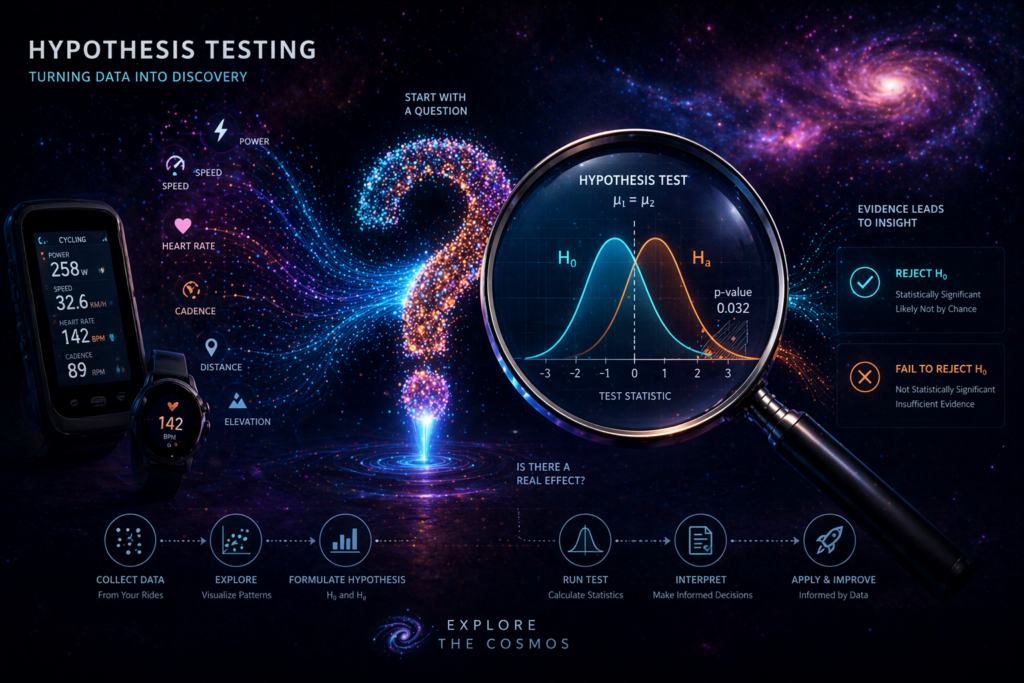

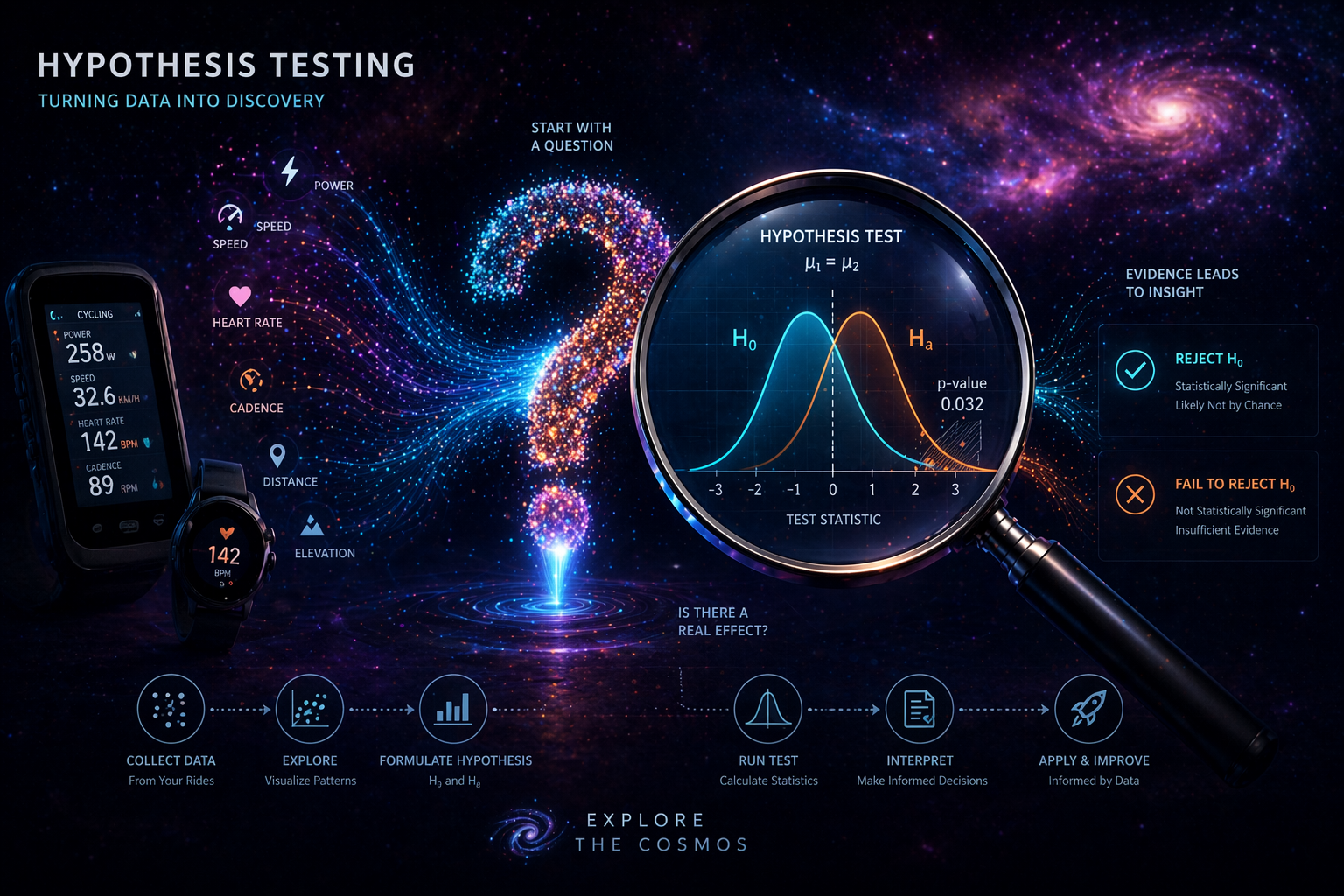

At its core, hypothesis testing is a statistical method used to make decisions or draw conclusions about a population based on sample data. It’s a systematic process designed to determine whether there is enough evidence in a sample of data to reject a specific statement or assumption about the population. Think of it as a formal way of asking, “Is this observation likely due to a real effect, or could it just be random chance?”

The process begins with formulating two competing statements: the null hypothesis (H₀) and the alternative hypothesis (H₁). The null hypothesis typically represents the status quo, a default assumption, or the idea that there is no significant effect or difference. For instance, if we’re testing a new training protocol, the null hypothesis might be that the new protocol has no effect on cycling speed. The alternative hypothesis, on the other hand, proposes that there is a significant effect or difference – in our example, that the new protocol does improve cycling speed.

We then collect data, analyze it, and use statistical tools to determine whether we have enough evidence to reject the null hypothesis in favor of the alternative. This isn’t about proving the alternative hypothesis is true; rather, it’s about showing that data like ours would be unlikely if the null hypothesis were true. Hypothesis testing remains a fundamental tool of inferential statistics, providing a structured framework to quantify uncertainty and draw conclusions from data.

Why Hypothesis Testing Matters: From Cycling to Complex Systems

The principles of hypothesis testing are universally applicable, from the granular details of athletic performance to the grandest explorations of space science. For cyclists using our Apple Health Cycling Analyzer, hypothesis testing can help validate the effectiveness of different training strategies. For example, you might hypothesize that increasing your interval training by 10% will lead to a 5% increase in your Functional Threshold Power (FTP). Hypothesis testing provides the framework to rigorously test this assumption using your own performance data.
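
To make the FTP example concrete, here is a minimal sketch of a paired test in plain Python. The FTP values are invented for illustration, and the test only asks whether FTP went up at all (not whether it rose by exactly 5%). It uses an exact sign-flip permutation test, which is one reasonable choice for small paired samples, not the only one.

```python
from itertools import product

# Hypothetical FTP test results in watts for eight riders, before and after
# a training block with 10% more interval work. These numbers are invented.
ftp_before = [242, 255, 230, 261, 248, 239, 252, 245]
ftp_after = [250, 259, 236, 262, 257, 243, 251, 254]

diffs = [after - before for before, after in zip(ftp_before, ftp_after)]
observed_total = sum(diffs)  # ranks outcomes the same way as the mean, in integers

# Under H0 (no effect), each rider's change is equally likely to be + or -.
# Enumerate all 2**8 sign patterns and count those at least as large as observed.
count = 0
total = 0
for signs in product((1, -1), repeat=len(diffs)):
    total += 1
    if sum(s * d for s, d in zip(signs, diffs)) >= observed_total:
        count += 1

p_value = count / total  # one-sided exact p-value
alpha = 0.05

print(f"mean change: {observed_total / len(diffs):.1f} W")
print(f"one-sided exact p-value: {p_value:.4f}")
print("reject H0" if p_value < alpha else "fail to reject H0")
```

Because every possible sign pattern is enumerated, the p-value here is exact rather than simulated, which is only practical for small samples.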

Beyond cycling, our mission at Explore the Cosmos is to delve into complex systems. Whether it’s understanding human performance, analyzing biological processes, or even probing the vastness of space, data-driven insights are paramount. Hypothesis testing allows us to ask meaningful questions and seek evidence-based answers. For instance, in evaluating the impact of a new feature in a software system or assessing the effectiveness of a public policy intervention, hypothesis testing offers a robust methodology for validation. The trend towards making “small, fast experiments” tied to specific decisions is gaining traction in 2026, emphasizing hypothesis-driven testing for validating assumptions before scaling.

The Core Components of Hypothesis Testing

To conduct a hypothesis test effectively, several key elements are involved:

- Null Hypothesis (H₀): The statement of no effect or no difference.

- Alternative Hypothesis (H₁): The statement that contradicts the null hypothesis, suggesting an effect or difference exists.

- Significance Level (α): This is the threshold for deciding whether to reject the null hypothesis. Commonly set at 0.05, it represents the probability of rejecting the null hypothesis when it is actually true (a Type I error).

- Test Statistic: A value calculated from the sample data that measures how far the sample result deviates from the null hypothesis.

- P-value: The probability of observing a test statistic as extreme as, or more extreme than, the one calculated from the sample data, assuming the null hypothesis is true. A small p-value (typically less than α) suggests that the observed data is unlikely under the null hypothesis, leading to its rejection.

- Decision: Based on the p-value and the significance level, we either reject the null hypothesis or fail to reject it. It’s crucial to note that “failing to reject” does not mean the null hypothesis is proven true; it simply means there wasn’t enough evidence to reject it.
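
The components above can be traced through one small example. The sketch below uses a permutation test on the difference in mean cycling speed between an old and a new training protocol. The speeds, the function name `permutation_test`, and the number of resamples are all assumptions made for illustration.

```python
import random
from statistics import mean

def permutation_test(group_a, group_b, n_resamples=5_000, seed=0):
    """One-sided permutation test of H1: mean(group_b) > mean(group_a)."""
    rng = random.Random(seed)
    observed = mean(group_b) - mean(group_a)  # test statistic
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_resamples):
        # Under H0 the group labels are interchangeable, so shuffle them.
        rng.shuffle(pooled)
        stat = mean(pooled[n_a:]) - mean(pooled[:n_a])
        if stat >= observed:
            at_least_as_extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    p_value = (at_least_as_extreme + 1) / (n_resamples + 1)
    return observed, p_value

# Hypothetical average speeds in km/h (invented data).
old_protocol = [28.1, 27.6, 28.4, 27.9, 28.0, 27.5, 28.3, 27.8]
new_protocol = [28.9, 28.6, 29.2, 28.4, 29.0, 28.7, 28.5, 29.1]

alpha = 0.05  # significance level, chosen before looking at the data
observed, p_value = permutation_test(old_protocol, new_protocol)
decision = "reject H0" if p_value < alpha else "fail to reject H0"

print(f"test statistic (difference in means): {observed:.2f} km/h")
print(f"p-value: {p_value:.4f}")
print(f"decision at alpha = {alpha}: {decision}")
```

A permutation test is used here because it needs no distributional assumptions and no third-party libraries; a t-test would be the more conventional choice when its assumptions hold.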

Moving Beyond the Basics: Advanced Hypothesis Testing in 2026

As data sets become larger and more complex, so do the methods required to analyze them. The landscape of hypothesis testing is continuously evolving. In 2026, we’re seeing a growing emphasis on advanced and alternative methods to overcome the limitations of classical approaches, particularly when assumptions like normality or homogeneity of variance are violated, or when dealing with high-dimensional data.

Techniques like Analysis of Variance (ANOVA), chi-square tests, and non-parametric methods such as the Mann-Whitney U test extend the basic two-group comparison to more intricate data structures. ANOVA compares the means of three or more independent groups, a common scenario in performance analysis where you might compare different training blocks or recovery protocols. Chi-square tests work on counts in categories, and the Mann-Whitney U test compares two groups without assuming normally distributed data.
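
As a rough illustration of the three-group case, the sketch below computes the one-way ANOVA F statistic by hand for three hypothetical training blocks. To stay within the standard library, the p-value comes from a permutation of group labels rather than from the F distribution, so treat it as an approximation of what a statistics package would report. The power values and function names are invented for this example.

```python
import random
from statistics import mean

def f_statistic(groups):
    """One-way ANOVA F: between-group variance over within-group variance."""
    values = [x for g in groups for x in g]
    grand_mean = mean(values)
    group_means = [mean(g) for g in groups]
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

def permutation_p_value(groups, n_resamples=2_000, seed=0):
    """Estimate how often shuffled labels give an F at least as large."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    pooled = [x for g in groups for x in g]
    sizes = [len(g) for g in groups]
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        start, shuffled = 0, []
        for size in sizes:
            shuffled.append(pooled[start:start + size])
            start += size
        if f_statistic(shuffled) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_resamples + 1)

# Hypothetical average power in watts for rides in three training blocks.
block_a = [210, 215, 208, 212, 214]
block_b = [218, 222, 219, 225, 221]
block_c = [211, 209, 214, 213, 210]

f_obs, p_value = permutation_p_value([block_a, block_b, block_c])
print(f"F = {f_obs:.2f}, permutation p-value = {p_value:.4f}")
```

A significant F only says that at least one group mean differs; identifying which one takes a follow-up comparison.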

Furthermore, the rise of machine learning and big data has spurred developments in hypothesis testing tailored for these environments. This includes methods for hypothesis testing in high-dimensional data and the integration of hypothesis testing within machine learning workflows for tasks like model validation. The concept of active hypothesis testing is also gaining traction, where computational budgets are managed to ensure valid statistical conclusions for a large number of hypotheses, especially in fields like genomics or large-scale A/B testing. This is particularly relevant as businesses increasingly aim for “learning velocity” by running efficient, hypothesis-driven tests.
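
Testing many hypotheses at once raises a practical problem: with enough tests, some p-values fall below 0.05 by chance alone. One widely used safeguard is the Benjamini-Hochberg procedure, which controls the false discovery rate. The sketch below is a standard multiple-testing correction, not an implementation of active hypothesis testing itself, and the p-values are invented.

```python
def benjamini_hochberg(p_values, fdr=0.05):
    """Return a list of booleans, True where the hypothesis is rejected."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k (1-based) whose p-value is <= (k / m) * fdr.
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            max_k = rank
    # Reject every hypothesis ranked at or below that k.
    rejected = [False] * m
    for idx in order[:max_k]:
        rejected[idx] = True
    return rejected

# Hypothetical p-values from ten simultaneous A/B tests.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]

naive_rejections = sum(p < 0.05 for p in p_values)
rejected = benjamini_hochberg(p_values, fdr=0.05)

print(f"rejected with a plain 0.05 cutoff: {naive_rejections}")
print(f"rejected after Benjamini-Hochberg: {sum(rejected)}")
```

In this example the correction keeps only the two strongest results, while a plain 0.05 cutoff would have flagged five.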

Common Misconceptions About Hypothesis Testing

Despite its utility, hypothesis testing is often misunderstood. Here are a few common pitfalls to avoid:

- Confusing statistical significance with practical significance: A statistically significant result (p < 0.05) indicates that an effect at least as large as the one observed would be unlikely to arise by chance alone if the null hypothesis were true. However, it doesn’t necessarily mean the effect is large or meaningful in a real-world context. With a large enough sample, a tiny improvement can be statistically significant yet practically irrelevant for your cycling performance.

- Misinterpreting the p-value: A p-value is NOT the probability that the null hypothesis is true. It’s the probability of observing the data (or more extreme data) if the null hypothesis were true.

- Believing “non-significant” means “no effect”: Failing to reject the null hypothesis doesn’t prove it true. It simply means we lack sufficient evidence to reject it at the chosen significance level. There might be a real effect, but our study might have lacked the power (e.g., insufficient sample size) to detect it.

- Ignoring assumptions: Many statistical tests rely on specific assumptions about the data (e.g., normality, independence). Violating these assumptions can lead to incorrect conclusions. This is why understanding advanced and alternative methods is crucial.
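
The first and third pitfalls are easy to see with numbers. The sketch below compares the same 0.5 W difference in average power at two sample sizes, reporting both the p-value and Cohen’s d (a common effect-size measure). It assumes a two-sided z-test with a known, shared standard deviation, which is a simplification; with small samples a t-test would normally be used. All figures are invented.

```python
from math import sqrt
from statistics import NormalDist

def compare_means(mean_a, mean_b, sd, n_per_group):
    """Two-sided z-test and Cohen's d for two equal-sized groups sharing one SD."""
    cohens_d = (mean_b - mean_a) / sd           # effect size, independent of n
    standard_error = sd * sqrt(2 / n_per_group)
    z = (mean_b - mean_a) / standard_error      # test statistic, grows with n
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return cohens_d, z, p_value

# Same hypothetical 0.5 W difference (250.0 W vs 250.5 W, SD 25 W).
d_large, z_large, p_large = compare_means(250.0, 250.5, 25.0, n_per_group=50_000)
d_small, z_small, p_small = compare_means(250.0, 250.5, 25.0, n_per_group=50)

print(f"n = 50,000 per group: d = {d_large:.2f}, z = {z_large:.2f}, p = {p_large:.4f}")
print(f"n = 50 per group:     d = {d_small:.2f}, z = {z_small:.2f}, p = {p_small:.4f}")
```

The effect size is identical in both runs; only the sample size changes. The large sample makes a negligible difference statistically significant, while the small sample lacks the power to say anything either way.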

Hypothesis Testing in Action: Practical Applications

The beauty of hypothesis testing lies in its practical application across diverse fields. At Explore the Cosmos, we see its value mirrored in our core offerings:

- Cycling Performance Analysis:

    - Training Optimization: Testing the hypothesis that a specific training intensity or duration improves VAM (velocità ascensionale media, the average rate of vertical ascent) or reduces Heart Rate Drift.

    - Pacing Strategy: Hypothesizing that a different pacing strategy on climbs results in a faster overall time, and validating this with recorded data.

    - Nutrition and Recovery: Testing whether changes in nutrition or recovery protocols correlate with measurable improvements in metrics like Efficiency Factor.

- Data Science & Machine Learning:

    - Model Validation: Using hypothesis tests to determine if a machine learning model’s performance on unseen data is significantly better than a baseline or a simpler model.

    - Feature Engineering: Testing if the addition of a new feature significantly improves a predictive model’s accuracy.

- General Decision Making:

    - Marketing Campaigns: A/B testing different ad creatives or landing page designs to see which performs significantly better in terms of click-through rates or conversion rates.

    - Product Development: Hypothesizing that a new feature will increase user engagement and testing this assumption with user data.

    - Scientific Research: As seen in drug trials, hypothesis testing is fundamental for validating the efficacy of new treatments.
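
For the A/B testing case, a common approach is a two-proportion z-test on click-through rates. The sketch below is a minimal version using only the standard library; the click and view counts are invented, and the function name `two_proportion_z_test` is ours rather than any library’s API.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference between two click-through rates."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    # Under H0 both variants share one rate, so pool the data to estimate it.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_a, rate_b, z, p_value

# Hypothetical campaign results: variant A vs variant B of a landing page.
rate_a, rate_b, z, p_value = two_proportion_z_test(
    clicks_a=200, views_a=5_000,
    clicks_b=260, views_b=5_000,
)

alpha = 0.05
print(f"CTR A = {rate_a:.1%}, CTR B = {rate_b:.1%}")
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("reject H0" if p_value < alpha else "fail to reject H0")
```

The normal approximation behind this test works best when each variant has a reasonably large number of clicks and non-clicks; for very small counts an exact test is the safer option.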

The trend in 2026 for Go-To-Market (GTM) strategies is shifting towards validation, using hypothesis-driven testing to prove what works before scaling. This mirrors our philosophy of data-driven discovery – testing assumptions to build confidence and drive informed action.

Embracing Data-Driven Discovery with Confidence

Hypothesis testing is more than just a statistical technique; it’s a mindset. It’s about approaching questions with a spirit of scientific inquiry, formulating clear, testable ideas, and letting the data guide our understanding. At Explore the Cosmos, we are committed to providing you with the tools and knowledge to embark on your own data-driven journeys, whether you’re optimizing your cycling performance or unraveling the mysteries of complex systems.

By understanding and applying hypothesis testing, you can move beyond guesswork, validate your assumptions, and make decisions with greater confidence. So, the next time you have a hunch about your training, a question about your data, or a complex problem to solve, remember the power of hypothesis testing. It’s your scientific compass for discovery.
