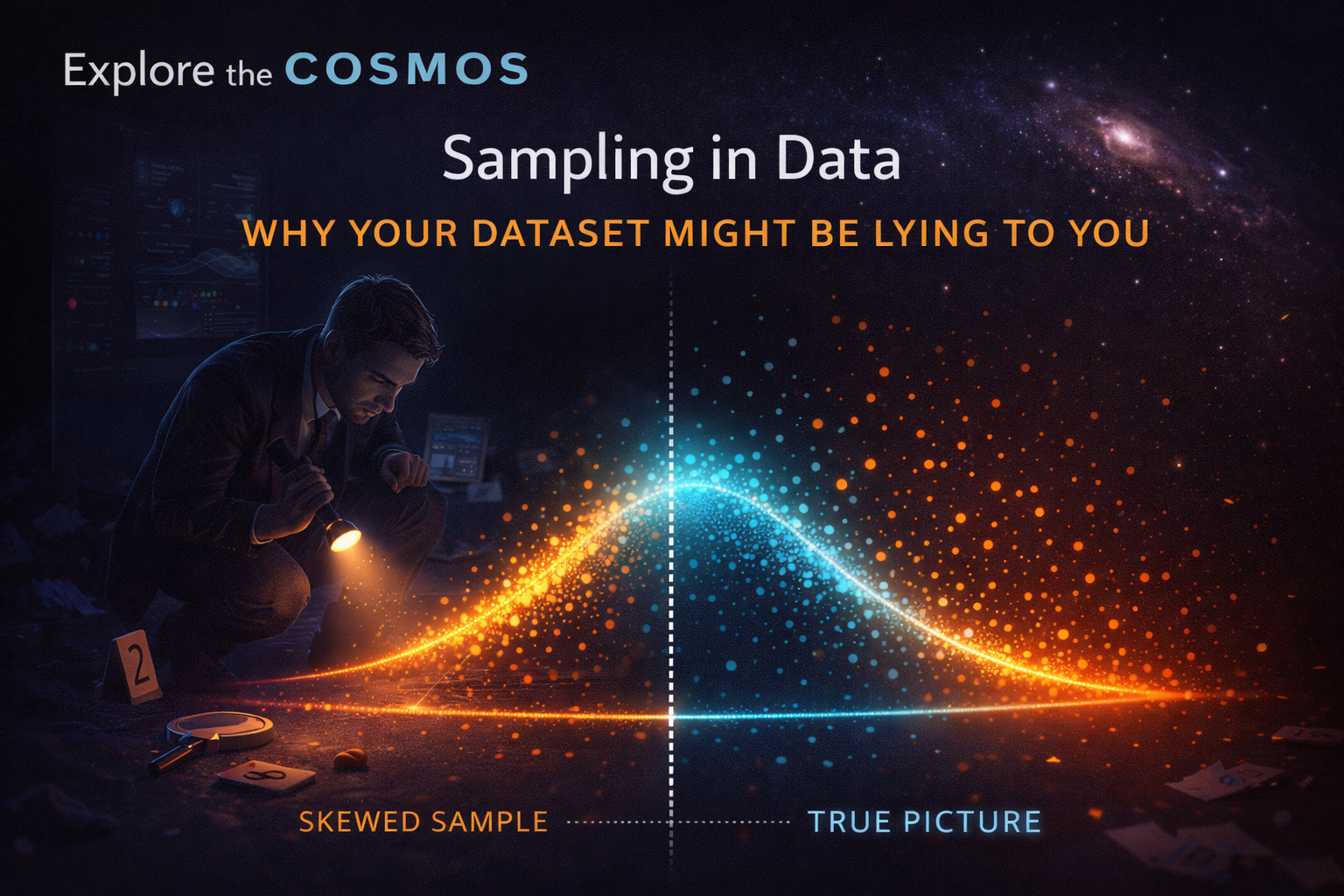

Imagine you’re a detective piecing together a crime scene. You can’t examine every grain of sand, every fiber of clothing, every speck of dust. Instead, you select key pieces of evidence (a fingerprint here, a dropped button there) and from these you infer what happened. This is the essence of sampling: selecting a subset of data from a larger population and using it to represent the whole. In the world of data, just as at a crime scene, we usually work with only a fraction of the full picture. At Explore the Cosmos, we understand that while data is our compass for discovery, the way we collect and interpret it is paramount. But what happens when our sample is skewed, or our selection process is flawed? We can end up with a dataset that actively misleads us, sending us on a wild goose chase for insights that simply aren’t there.

The Illusion of Completeness: When Samples Misrepresent Reality

We often hear about the power of big data, but the reality is that working with an entire population is frequently impractical, if not impossible. Whether it’s analyzing the performance metrics of every cyclist who has ever used our Apple Health Cycling Analyzer, or understanding the intricate workings of a distant star system, we must often rely on samples. The goal is to glean insights from a manageable subset that accurately reflects the characteristics of the whole. However, the devil is in the details of the sampling process. A sample that doesn’t mirror the diversity and distribution of the original population can lead to conclusions that are wildly off the mark.
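To see how a skewed sample can be wildly off the mark while a random one lands close, here is a minimal Python simulation. The population is entirely made up for illustration: 8,000 hypothetical casual riders averaging about 18 km/h and 2,000 hypothetical enthusiasts averaging about 30 km/h. The skewed sample can only reach the enthusiasts, as if the data came from one narrow collection channel. The function names are illustrative, not part of any Explore the Cosmos tool.

```python
import random
import statistics


def make_population(seed: int = 42) -> list[float]:
    """Build a hypothetical population of average ride speeds in km/h."""
    rng = random.Random(seed)
    casual = [rng.gauss(18, 3) for _ in range(8000)]       # most riders
    enthusiasts = [rng.gauss(30, 4) for _ in range(2000)]  # a faster minority
    return casual + enthusiasts


def compare_samples(population: list[float], n: int = 500, seed: int = 7):
    """Return (true mean, random-sample mean, skewed-sample mean)."""
    rng = random.Random(seed)
    true_mean = statistics.fmean(population)
    # Simple random sample: every rider has the same chance of selection.
    random_sample = rng.sample(population, n)
    # Skewed sample: only the last 2,000 entries (the enthusiasts) can be chosen.
    skewed_sample = rng.sample(population[-2000:], n)
    return true_mean, statistics.fmean(random_sample), statistics.fmean(skewed_sample)


if __name__ == "__main__":
    true_mean, random_mean, skewed_mean = compare_samples(make_population())
    print(f"population mean:    {true_mean:.1f} km/h")
    print(f"random sample mean: {random_mean:.1f} km/h")
    print(f"skewed sample mean: {skewed_mean:.1f} km/h")
```

Both samples contain 500 riders, and only the selection process differs. The random sample should land near the population mean of roughly 20 km/h. The skewed one reports something close to 30 km/h, and drawing a larger skewed sample would not fix that.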

The Pitfalls of Non-Representative Sampling in 2026

As we navigate 2026, data collection methods keep growing more sophisticated. Yet the fundamental challenges of sampling persist and, in some ways, are amplified by the sheer volume and velocity of data. Recent trends show how easily data can become skewed, even with seemingly robust collection strategies.

- Algorithmic Bias Amplification: In 2026, a significant concern in data sampling is how algorithms themselves can inadvertently perpetuate and even amplify existing biases within datasets. If an algorithm is trained on data that over-represents a certain demographic or condition, its outputs will reflect that skew. If those outputs then steer what data is collected or analyzed next, the skew can grow with each cycle. For instance, suppose a health monitoring system (even one focused on privacy, like ours) initially collects more data from a specific age group or activity level. Subsequent analyses might then incorrectly generalize findings to the broader population. This makes it crucial to regularly audit both the sampling methods and the algorithms that use them for fairness and representativeness.

- The Rise of “Synthetic Data” and its Sampling Challenges: With increasing privacy concerns and the need for large datasets for AI training, synthetic data generation has boomed. Synthetic data is valuable, but it can introduce new forms of distortion in two places: the real-world data used to train the generative models, and the methods used to sample from their output. If the initial “seed” data used to create a synthetic dataset is biased, the synthetic data will inherit and potentially magnify those biases. Researchers in 2026 are grappling with how to ensure that samples drawn from synthetic datasets accurately reflect potential real-world outcomes without carrying over the original data’s limitations.

- Dynamic and Evolving Populations: Many populations are not static. Think of the rapid growth of new user segments for digital services, or the changing environmental conditions that influence scientific observations. Sampling strategies that worked only a few years ago may already be obsolete. In 2026, there’s a growing emphasis on adaptive sampling techniques that adjust as the underlying population changes. For example, in cycling performance, a new type of training or equipment might become popular. A static sampling approach might miss its impact, whereas an adaptive method could incorporate it into future analyses.
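The auditing advice in the first point can start as simply as comparing a sample’s composition with known population proportions. This Python sketch assumes you have trustworthy population shares from outside the sample, such as census figures or a customer registry. The function name `audit_representation`, the age groups, and the 5-percentage-point tolerance are all illustrative assumptions, not a standard.

```python
def audit_representation(
    sample_counts: dict[str, int],
    population_shares: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, float]:
    """Return {group: sample share minus population share} for groups off by more than `tolerance`.

    Groups present in the population but absent from the sample count as zero,
    so a segment the collection method missed entirely is flagged too.
    """
    total = sum(sample_counts.values())
    if total == 0:
        raise ValueError("sample is empty")
    gaps = {}
    for group, expected_share in population_shares.items():
        observed_share = sample_counts.get(group, 0) / total
        gap = observed_share - expected_share
        if abs(gap) > tolerance:
            gaps[group] = gap
    return gaps


if __name__ == "__main__":
    # Hypothetical numbers; the population shares are assumed to be known.
    sample = {"18-34": 550, "35-54": 380, "55+": 70}
    population = {"18-34": 0.30, "35-54": 0.40, "55+": 0.30}
    for group, gap in audit_representation(sample, population).items():
        print(f"{group}: {gap:+.0%} vs. population")
```

With these hypothetical numbers, the audit flags 18-34 as over-represented by about 25 percentage points and 55+ as under-represented by about 23. The 35-54 group passes.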

Why Your Dataset is Lying: Common Sampling Errors

A “lying” dataset is one whose apparent characteristics, derived from a sample, do not accurately reflect the true characteristics of the population from which it was drawn. The deception usually stems from flaws in how the sample was selected, measured, or filtered. Understanding these errors is the first step toward building trust in the data we use for discovery, whether it’s optimizing cycling performance or exploring the cosmos.
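One way to make “lying” precise uses standard estimator notation that the rest of this post doesn’t rely on. Let θ be the true population value (say, average ride speed) and θ̂ the value computed from a sample. The expected squared error then splits into two parts:

```latex
\mathbb{E}\big[(\hat{\theta} - \theta)^2\big]
  = \underbrace{\operatorname{Var}(\hat{\theta})}_{\text{random sampling noise}}
  + \underbrace{\big(\mathbb{E}[\hat{\theta}] - \theta\big)^2}_{\text{bias}^2}
```

For a simple random sample of size n, the variance of the sample mean shrinks roughly as σ²/n, so collecting more data drives the first term toward zero. The bias term does not shrink with n. A skewed selection process keeps producing the same wrong answer, only with more apparent confidence.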

Selection Bias: When the Sample Chooses Itself (or is Chosen Unfairly)

This is perhaps the most insidious form of sampling error. Selection bias occurs when the process of selecting the sample systematically excludes certain individuals or groups from the population, or includes others disproportionately.

- Convenience Sampling: Imagine asking the first 50 people you see at a park about their favorite sport. You’re likely to get a skewed result because you’re only sampling those who happen to be at that park at that specific time. This is convenience sampling – easy, but often unrepresentative.

- Self-Selection Bias: This happens when individuals choose whether or not to be included in the sample. For instance, online surveys often suffer from this. People who feel strongly about a topic are more likely to respond, creating a sample that doesn’t reflect the general population’s nuanced views.

- Undercoverage: If our data collection methods miss entire segments of the population, we have undercoverage. For example, if our Apple Health Cycling Analyzer data primarily comes from users with newer iPhone models, we might miss valuable insights from users with older devices who may have different usage patterns or data quality.
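The self-selection effect in online surveys is easy to reproduce in a short Python simulation. Everything here is a labeled assumption: opinions on a 1-5 scale are equally likely, and the response rates (60% for the extremes, 5-10% for moderate views) are invented to illustrate the mechanism, not measured from any real survey.

```python
import random


def extreme_share(opinions: list[int]) -> float:
    """Fraction of answers at either end of a 1-5 scale."""
    return sum(1 for o in opinions if o in (1, 5)) / len(opinions)


def simulate_self_selection(n_people: int = 20_000, seed: int = 1) -> tuple[float, float]:
    """Return (extreme share in the population, extreme share among respondents)."""
    rng = random.Random(seed)
    # Hypothetical opinions, each point on the 1-5 scale equally likely.
    opinions = [rng.randint(1, 5) for _ in range(n_people)]
    # Assumed response rates: people with strong views are far more likely to answer.
    response_rate = {1: 0.60, 2: 0.10, 3: 0.05, 4: 0.10, 5: 0.60}
    respondents = [o for o in opinions if rng.random() < response_rate[o]]
    return extreme_share(opinions), extreme_share(respondents)


if __name__ == "__main__":
    population_share, respondent_share = simulate_self_selection()
    print(f"extreme views in population:  {population_share:.0%}")
    print(f"extreme views among responses: {respondent_share:.0%}")
```

Under these assumed response rates, about 40% of the population holds an extreme view. Yet extreme views make up more than 80% of the responses, even though nobody answered dishonestly.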

Measurement Error: Flaws in the Instruments or Methods

Even with a perfectly representative sample, errors in how data is measured or recorded can lead to a distorted view. This isn’t strictly a sampling error, but it contaminates the sample, making it lie about the true state of the world.

- Inaccurate Tools: A faulty heart rate monitor on a smartwatch, or a GPS device that loses signal in tunnels, introduces measurement error. This distorts the metrics derived from those readings, such as heart rate drift or total distance.

- Response Bias: Respondents might not answer truthfully, either because of social desirability (giving the answer that sounds best) or because they misunderstand the question. This can happen in surveys or even in how users interpret prompts for data input.

- Data Entry Errors: Simple typos or transcription mistakes during data input or processing can also introduce noise.
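The GPS example is easy to quantify. When a receiver drops fixes, the track is effectively rebuilt as a straight line between the last and next good points, and that line cuts corners on any route that bends. This Python sketch uses the haversine formula, a standard great-circle approximation that treats Earth as a sphere. It measures a made-up route of three roughly 1.1 km legs, then measures it again with the middle fixes lost. The coordinates and function names are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(p: tuple[float, float], q: tuple[float, float]) -> float:
    """Great-circle distance in meters between two (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def track_length_m(points: list[tuple[float, float]]) -> float:
    """Sum the distances between consecutive GPS fixes."""
    return sum(haversine_m(a, b) for a, b in zip(points, points[1:]))


if __name__ == "__main__":
    # Hypothetical route along three sides of a small rectangle near the equator.
    full_track = [(0.0, 0.0), (0.01, 0.0), (0.01, 0.01), (0.0, 0.01)]
    # Same ride with the two middle fixes lost (e.g., signal dropout in a tunnel).
    gapped_track = [full_track[0], full_track[-1]]
    print(f"full track:   {track_length_m(full_track):.0f} m")
    print(f"gapped track: {track_length_m(gapped_track):.0f} m")
```

Here the full track measures roughly 3.3 km, but the gapped track comes out around 1.1 km. The rider’s effort was identical; only the recorded evidence changed.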

Survivorship Bias: The Ghosts of Data Past

This is a particularly common trap. Survivorship bias occurs when we focus only on the “survivors” – the subjects that made it through a particular process – and overlook those that didn’t. This can lead to an overly optimistic assessment.

- Cycling Performance Example: Imagine analyzing the peak performance data of cyclists who have completed a major event. If you only look at the finishers, you miss the crucial data from those who dropped out due to injury, poor pacing, or inadequate training. Your sample would suggest that everyone who starts finishes strong, which is rarely the case.

- Space Exploration Analogy: In space exploration, if we only study the successful missions, we might underestimate the challenges and failure points inherent in designing spacecraft or deep-space probes. The history of failed missions holds invaluable lessons that shouldn’t be ignored.
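The cycling example can be simulated directly in Python. This sketch assumes a hypothetical field of starters whose sustainable power is normally distributed around 220 W. It also uses a deliberately crude dropout rule: anyone below 190 W abandons. It then compares the average over everyone who started with the average over finishers only.

```python
import random
import statistics


def simulate_event(n_starters: int = 5000, seed: int = 3) -> tuple[float, float, float]:
    """Return (mean power of all starters, mean power of finishers, finish rate)."""
    rng = random.Random(seed)
    # Hypothetical sustainable power (watts) for everyone on the start line.
    starters = [rng.gauss(220, 40) for _ in range(n_starters)]
    # Assumed dropout rule: riders below 190 W abandon; everyone else finishes.
    finishers = [w for w in starters if w >= 190]
    return (
        statistics.fmean(starters),
        statistics.fmean(finishers),
        len(finishers) / n_starters,
    )


if __name__ == "__main__":
    all_mean, finisher_mean, finish_rate = simulate_event()
    print(f"all starters: {all_mean:.0f} W")
    print(f"finishers:    {finisher_mean:.0f} W ({finish_rate:.0%} finished)")
```

Under these assumptions, roughly a quarter of starters drop out. The finishers’ average sits around 15 W above the true average of everyone who started. None of the finishers’ data is wrong; the error comes entirely from which riders were kept.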

Building Trustworthy Data: Strategies for Better Sampling

At Explore the Cosmos, our commitment to data-driven discovery is intertwined with a deep respect for data integrity. While our tools, like the Apple Health Cycling Analyzer, are designed with privacy at their core, the insights derived from the data are only as good as the data itself. Here’s how we can all work towards more reliable data:

Randomization is Your Friend

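The gold standard for avoiding selection bias is random sampling, where chance, rather than convenience or self-selection, decides who is included. In simple random sampling, every member of the population has an equal chance of being included. Other probability-based methods can improve representativeness or reduce cost. Stratified sampling divides the population into subgroups and draws a random sample from each. Cluster sampling randomly selects whole groups (for example, entire cycling clubs) and then includes everyone in the chosen groups, or a random subset of them.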
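Stratified sampling with proportional allocation takes only a few lines. In this Python sketch, each subgroup contributes a share of the sample equal to its share of the population, and members are chosen at random within each subgroup. The ride types and counts are hypothetical, and `stratified_sample` is an illustrative helper rather than a library function. Because of rounding, the strata sizes may not always sum exactly to `n_total`.

```python
import random
from collections import Counter, defaultdict
from collections.abc import Callable, Hashable, Sequence
from typing import TypeVar

T = TypeVar("T")


def stratified_sample(
    records: Sequence[T],
    stratum_of: Callable[[T], Hashable],
    n_total: int,
    seed: int = 0,
) -> list[T]:
    """Draw a proportionally allocated stratified random sample."""
    rng = random.Random(seed)
    # Group records by stratum (e.g., ride type or age band).
    strata: dict[Hashable, list[T]] = defaultdict(list)
    for record in records:
        strata[stratum_of(record)].append(record)
    sample: list[T] = []
    for members in strata.values():
        # Each stratum's quota is proportional to its size in the population.
        k = round(n_total * len(members) / len(records))
        # Simple random sampling within the stratum.
        sample.extend(rng.sample(members, min(k, len(members))))
    return sample


if __name__ == "__main__":
    # Hypothetical rides: 700 road, 200 gravel, 100 mountain-bike.
    rides = ([(i, "road") for i in range(700)]
             + [(i, "gravel") for i in range(700, 900)]
             + [(i, "mtb") for i in range(900, 1000)])
    picked = stratified_sample(rides, stratum_of=lambda r: r[1], n_total=100)
    print(Counter(kind for _, kind in picked))
```

With these counts, the sample contains exactly 70 road, 20 gravel, and 10 mountain-bike rides. A simple random sample of 100 would match those proportions only on average.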

Understand Your Population

Before you even think about sampling, you need to have a clear understanding of the population you are trying to represent. What are its key characteristics? What is its diversity? This foundational knowledge guides the selection of appropriate sampling methods.

Be Skeptical of Your Own Data

Always question your data. Ask: Who is represented? Who is missing? How might the data have been collected? Could there be biases at play? This critical self-reflection is essential, especially when dealing with data that seems too good to be true, or conclusions that appear to be overly simplistic.

Combine Data Sources (When Appropriate)

Sometimes, a single data source might be biased. Cross-referencing with other, independent datasets can help validate findings and identify potential discrepancies introduced by sampling errors in one source.
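One rough way to cross-reference two independent sources is to check whether their estimates of the same quantity differ by more than random noise alone would explain. This Python sketch computes a Welch-style z-score for two lists of measurements. The function name and the sample values are hypothetical, and any cut-off you choose, such as |z| > 3, is an illustrative choice rather than a rule. A large value does not say which source is biased, only that at least one probably is or that they cover different populations. A small value does not rule out a bias the two sources share.

```python
import math
import statistics


def discrepancy_z(source_a: list[float], source_b: list[float]) -> float:
    """Difference in means between two independent sources, in standard-error units.

    Uses the unpooled standard error sqrt(var_a/n_a + var_b/n_b), as in Welch's
    t-test. Each source needs at least two values.
    """
    diff = statistics.fmean(source_a) - statistics.fmean(source_b)
    se = math.sqrt(statistics.variance(source_a) / len(source_a)
                   + statistics.variance(source_b) / len(source_b))
    if se == 0:
        # No spread at all: any nonzero difference is infinitely "significant".
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / se


if __name__ == "__main__":
    # Hypothetical average speeds (km/h) from two independent sources on similar riders.
    source_a = [20.1, 21.4, 19.8, 20.6, 22.0, 18.9]
    source_b = [23.0, 24.2, 22.5, 23.9, 24.8, 22.1]
    print(f"z = {discrepancy_z(source_a, source_b):.1f}")
```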

Acknowledge Limitations

As we emphasize in our content, honesty about limitations is key. If your sample has known biases, acknowledge them. This doesn’t invalidate the insights but provides crucial context for their interpretation. For instance, when discussing cycling performance, we’d note if our analysis was primarily based on data from a specific geographic region or device type.

Conclusion: The Journey from Sample to Truth

Sampling is an indispensable tool in our quest for knowledge, a way to manage complexity and extract meaning from vast oceans of data. However, it’s a path fraught with potential pitfalls. A poorly chosen sample can lead us astray, causing our datasets to lie, not out of malice, but out of flawed construction. By understanding the common errors – selection bias, measurement error, and survivorship bias – and by employing rigorous sampling strategies, we can build more reliable insights. At Explore the Cosmos, we believe that true discovery comes from understanding not just the data, but also the nuances of how that data is collected and interpreted. So, the next time you look at a dataset, remember the detective at the crime scene: be thorough, be critical, and always question if your sample is telling you the whole story.
