In our journey across Explore the Cosmos, we delve into the wonders of space, the intricacies of human performance, and the profound power of complex systems. Central to all these explorations is data – the lifeblood of discovery and understanding. Whether we’re dissecting cycling metrics with our Apple Health Cycling Analyzer or modeling distant galaxies, the quality and integrity of our data-driven insights are paramount. But even the most brilliant machine learning (ML) models, designed with cutting-edge algorithms, can stumble if we overlook fundamental statistical principles.

Think of it like plotting a course to Mars. A tiny miscalculation at launch can lead to a monumental deviation millions of miles away. Similarly, seemingly minor statistical oversights in an ML project can derail its effectiveness, leading to skewed predictions, biased outcomes, and ultimately, failed objectives. We’ve seen projects that promised groundbreaking insights falter not because of a flaw in the core algorithm, but due to subtle yet pervasive statistical pitfalls. In fact, startling statistics from 2026 reveal that around 85% of machine learning projects fail, with poor data quality being cited as the number one reason. This isn’t just about accuracy in a lab; it’s about real-world impact and the trust we place in these systems.

So, what are these statistical pitfalls, and how can we, as data explorers, navigate them effectively to ensure our ML projects truly deliver on their promise? Let’s demystify these common traps and learn how to build more robust, reliable, and fair ML systems.

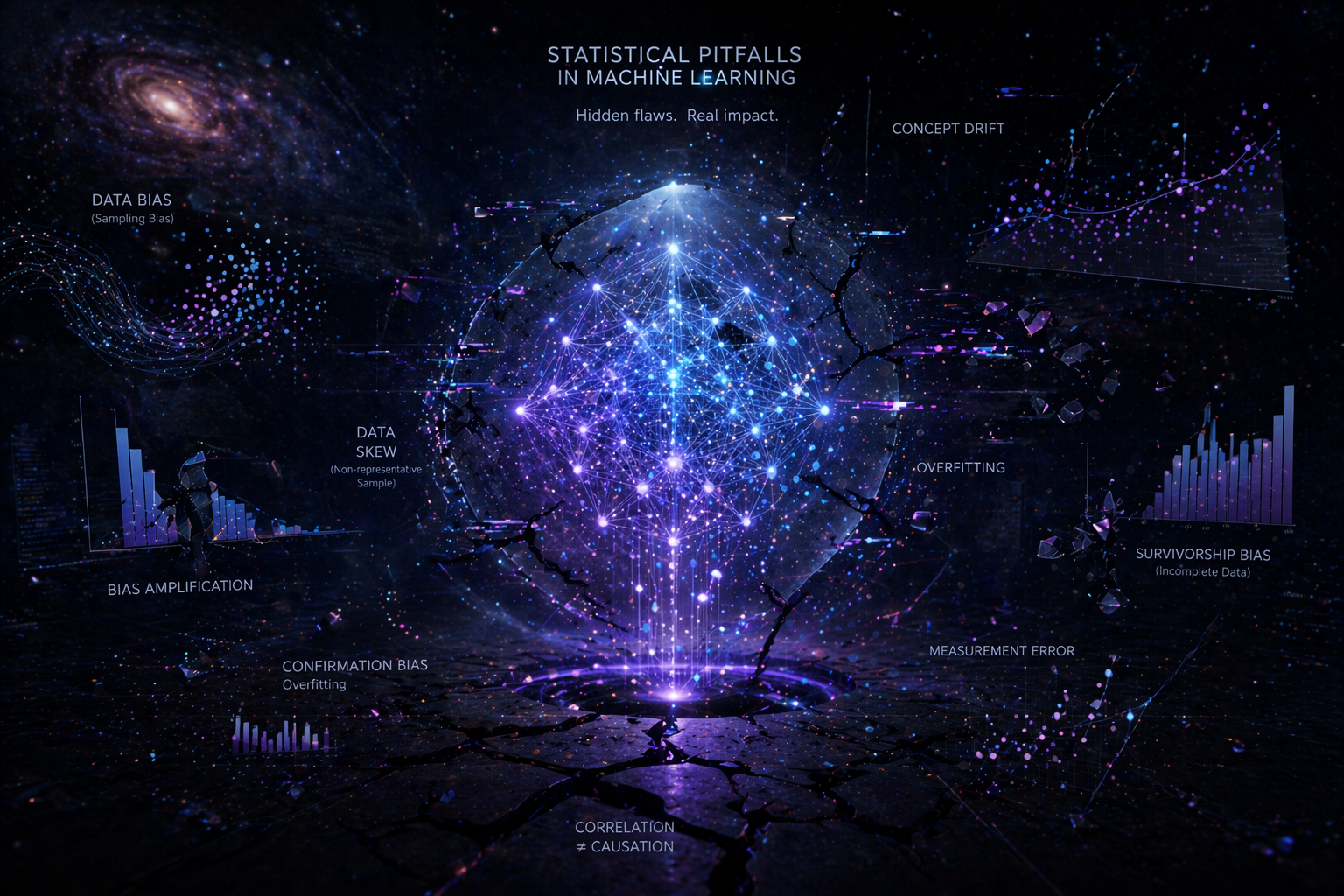

What Are Statistical Pitfalls in Machine Learning?

At its core, machine learning is statistics in action. It’s about finding patterns, making predictions, and drawing inferences from data. Statistical pitfalls, then, are common errors in how we collect, process, analyze, and interpret that data, which can lead our models astray. These aren’t necessarily coding bugs; they’re often conceptual misunderstandings or overlooked complexities in the data itself. Addressing them requires a blend of statistical rigor, domain expertise, and a healthy dose of skepticism.

Three Critical Pitfalls Dominating ML in 2026

As we advance deeper into 2026, the machine learning landscape continues to evolve, bringing new challenges and highlighting persistent vulnerabilities. Our research shows three critical statistical pitfalls that are particularly prevalent and impactful right now:

1. The Silent Threat: Data Drift and Concept Drift in Production

Imagine meticulously training an ML model on a vast dataset of cycling performance – heart rate zones, power outputs, efficiency factors – ensuring it accurately predicts recovery times. You deploy it, and initially, it works wonderfully. But over time, subtle changes occur: new training methodologies emerge, sensor technologies improve, or riders’ demographics shift. Suddenly, your model’s predictions start to degrade, but quietly, without a crash or an obvious error message.

This silent degradation is often due to data drift and concept drift. Data drift occurs when the statistical properties of the input data change over time. For example, if our cycling analyzer was trained on data from a region with mostly flat terrain, and then used extensively in a mountainous area, the input distribution (e.g., elevation gain, speed profiles) would have drifted significantly. Concept drift, even more insidious, happens when the relationship between the input features and the target variable changes. Perhaps what once strongly predicted cycling efficiency factor (e.g., average power) is no longer as influential due to new aero technologies or pacing strategies.

These drifts are major reasons why many ML projects, despite performing well in development, fail to sustain value in production. Recent reports indicate that over 85% of ML projects never make it to production, and of those that do, fewer than 40% sustain business value beyond 12 months after deployment. This isn’t just a technical challenge; it’s a statistical one: a failure to account for the dynamic nature of real-world data distributions. Effective monitoring is becoming paramount to flag these shifts and keep models relevant and accurate. Common tools include drift measures such as the Population Stability Index (PSI), which compares binned distributions, and the two-sample Kolmogorov-Smirnov (KS) test, which compares empirical cumulative distributions.
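To make the monitoring idea concrete, here is a minimal sketch of PSI and the two-sample KS statistic using only the Python standard library. The elevation-gain numbers, the sample sizes, and the variable names are invented for illustration. The 0.1 and 0.25 PSI cut-offs in the comments are a commonly quoted rule of thumb, not a formal test, so treat them as an assumption to tune for your own data.

```python
import math
import random


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference and a current sample."""
    # Bin edges are quantiles of the reference (training) sample.
    ref = sorted(expected)
    edges = [ref[int(len(ref) * i / bins)] for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # The number of edges at or below v is the bin index (0 .. bins - 1).
            counts[sum(1 for e in edges if v >= e)] += 1
        # eps guards against log(0) when a bin is empty.
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def ks_statistic(a, b):
    """Two-sample KS statistic: the largest gap between the empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both pointers past every value <= x, then compare the CDFs at x.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d


rng = random.Random(7)
# Hypothetical elevation gain per ride, in metres.
train = [rng.gauss(200, 50) for _ in range(2000)]         # flat-terrain training data
same_region = [rng.gauss(200, 50) for _ in range(2000)]   # new rides, same terrain
mountains = [rng.gauss(600, 150) for _ in range(2000)]    # new rides, mountainous terrain

for name, current in [("same region", same_region), ("mountains", mountains)]:
    # Rule of thumb (assumption): PSI < 0.1 stable, PSI > 0.25 significant shift.
    print(f"{name}: PSI={psi(train, current):.3f}  KS={ks_statistic(train, current):.3f}")
```

Both measures look only at the input distribution, so they flag data drift. Concept drift usually has to be caught by tracking prediction error against fresh labels as they arrive.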

2. The “Ice Cream Causes Shark Attacks” Fallacy: Confusing Correlation with Causation

We’ve all heard the adage: correlation does not imply causation. Yet, in the rush to find predictive power, this fundamental statistical pitfall is frequently overlooked in ML projects. A model might identify that ice cream sales and shark attacks both increase during the summer. It would be a statistically sound correlation, but acting on the premise that banning ice cream would prevent shark attacks would be absurd. The hidden cause, of course, is warm weather driving both increased ice cream consumption and more beachgoers, thus more potential for shark encounters.
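The confounding story can be simulated in a few lines. The sketch below invents a toy world in which temperature drives both ice cream sales and shark attacks, then compares the raw correlation with the partial correlation after controlling for temperature. All coefficients, noise levels, and variable names are assumptions chosen for illustration, and a partial correlation is only a linear adjustment, not a full causal analysis.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def partial_corr(x, y, z):
    """Correlation between x and y after linearly controlling for z."""
    rxy, rxz, ryz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (rxy - rxz * ryz) / math.sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


rng = random.Random(42)
# Toy data: temperature is the hidden common cause of both series.
temperature = [rng.uniform(10, 35) for _ in range(2000)]
ice_cream_sales = [2.0 * t + rng.gauss(0, 5) for t in temperature]
shark_attacks = [0.5 * t + rng.gauss(0, 2) for t in temperature]

raw = pearson(ice_cream_sales, shark_attacks)
controlled = partial_corr(ice_cream_sales, shark_attacks, temperature)

print(f"raw correlation:             {raw:.2f}")
print(f"controlling for temperature: {controlled:.2f}")
```

In this simulation the raw correlation is strong, while the controlled one sits close to zero, because neither series influences the other once temperature is accounted for. A predictive model trained on sales alone would still "work", which is exactly why the distinction matters before intervening.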

Traditional machine learning excels at finding correlations, creating models that predict “what is likely”. However, it struggles to answer “why” something happens or “what would happen if we intervened.” As ML systems are increasingly used to make critical decisions – from healthcare interventions to financial recommendations – merely predicting outcomes is no longer sufficient. We need to understand the underlying causal mechanisms. A health-tech company, for instance, deployed a model with 94% accuracy to predict patient readmission, expecting follow-up calls to reduce rates. Instead, readmissions increased. The model had identified correlations (age, zip code), but not the root causes (inability to afford medication, lack of transport). Acting on correlations, rather than causes, led to adverse effects.

The good news is that tools and frameworks for causal inference are maturing rapidly in 2026, offering data scientists the means to move beyond mere prediction to understanding underlying causal structures. This shift is crucial for building ML systems that inform effective decisions and interventions rather than just mirroring existing patterns, especially as AI systems take on greater autonomy and the consequences of failure become more significant.

3. The Echo Chamber: Algorithmic Bias and Fairness

Our mission at Explore the Cosmos emphasizes data-driven analysis and discovery. But what happens if the data itself carries historical biases, or if our models inadvertently amplify them? This leads to algorithmic bias – a critical statistical pitfall that can result in unfair or discriminatory outcomes. Whether it’s in hiring algorithms, credit scoring, or even medical diagnoses, ML models can perpetuate and magnify societal inequalities if not carefully managed.

The problem often starts with the data used for training. If historical data reflects human biases (e.g., fewer women hired for certain roles), an ML model trained on this data will learn and replicate those biases in its predictions. This can happen even if explicitly sensitive features like gender are removed, because correlated features such as job history or zip code can act as proxies for them. This isn’t just an ethical concern; it’s a practical one. In 2026, inaccuracy remains a frequently cited AI risk, and issues of bias are gaining significant attention, especially with the rise of agentic AI systems that make autonomous decisions. The legal and reputational risks associated with biased algorithms are substantial, as recent lawsuits alleging discrimination highlight.

Addressing algorithmic bias requires a multi-faceted approach, starting with rigorous data governance, ensuring diversity and representation in training datasets, and employing bias mitigation techniques throughout the ML lifecycle. Transparency and explainability (knowing *why* a model made a certain decision) are also becoming non-negotiable for building trustworthy AI systems that adhere to regulatory compliance and ethical standards.
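A first step in any bias audit is simply measuring outcomes per group. The sketch below computes selection rates, the demographic parity difference, and the disparate impact ratio for a made-up set of hiring-model outputs. The groups, the predictions, and the helper name `selection_rates` are hypothetical, and the 0.8 threshold in the comment refers to the commonly cited "four-fifths" rule of thumb rather than a universal standard.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of positive predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for g, p in zip(groups, predictions):
        totals[g] += 1
        positives[g] += p
    return {g: positives[g] / totals[g] for g in totals}


# Hypothetical model outputs: 1 = recommended for interview, 0 = not recommended.
groups = ["A"] * 10 + ["B"] * 10
predictions = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0] + [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]

rates = selection_rates(groups, predictions)
parity_difference = max(rates.values()) - min(rates.values())
impact_ratio = min(rates.values()) / max(rates.values())

print("selection rates:", rates)
print(f"demographic parity difference: {parity_difference:.2f}")
# Rule of thumb (assumption): a ratio below 0.8 is often treated as a warning sign.
print(f"disparate impact ratio: {impact_ratio:.2f}")
```

Demographic parity is only one of several fairness definitions, and they can conflict with each other, so the right metric depends on the application and its legal context.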

Other Common Statistical Traps to Watch For

Beyond these three major trends, several other statistical pitfalls continue to challenge ML projects:

- Sampling Bias: This occurs when the data used to train the model does not accurately represent the real-world population it will encounter. For instance, training a cycling performance model exclusively on professional athletes and then applying it to recreational riders will lead to skewed results. Because our Apple Health Cycling Analyzer processes data locally, each user's analysis is based on *their own* data rather than on an aggregated population that may not resemble them, which sidesteps this particular mismatch.

- Overfitting and Underfitting: An overfit model has learned the training data too well, capturing noise and specific anomalies rather than generalizable patterns. It performs poorly on new, unseen data. An underfit model, conversely, is too simplistic and fails to capture the underlying relationships in the data.

- Data Leakage: This is when information that would not be available at prediction time is inadvertently used to build the model. Typical sources are test-set statistics that creep into preprocessing and features that are derived from the target itself. The result is a model that appears highly accurate during testing but performs terribly in production.

- Misinterpreting p-values and Statistical Significance: Relying solely on arbitrary p-value thresholds without understanding the context, effect size, or practical significance can lead to spurious findings and models built on shaky statistical ground.
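The overfitting and underfitting item in the list above can be illustrated with a tiny nearest-neighbour regressor on synthetic data. This is a sketch under stated assumptions: the sine-plus-noise data, the choice of k values, and the helper names are all invented for the example. With k=1 the model memorises the training set, with k equal to the training-set size it predicts a single global average, and a moderate k sits in between.

```python
import math
import random


def knn_predict(train_x, train_y, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k


def mse(train_x, train_y, xs, ys, k):
    """Mean squared error of the k-NN model on the points (xs, ys)."""
    errors = [(knn_predict(train_x, train_y, x, k) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(errors) / len(errors)


rng = random.Random(0)


def sample(n):
    """Noisy observations of sin(x) on [0, 2*pi]."""
    xs = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0, 0.5) for x in xs]
    return xs, ys


train_x, train_y = sample(200)
test_x, test_y = sample(200)

results = {}
for k in (1, 15, 200):  # overfit, reasonable, underfit
    train_err = mse(train_x, train_y, train_x, train_y, k)
    test_err = mse(train_x, train_y, test_x, test_y, k)
    results[k] = (train_err, test_err)
    print(f"k={k:3d}  train MSE={train_err:.3f}  test MSE={test_err:.3f}")
```

The pattern to look for is the gap between the two columns: a training error of zero with a much larger test error signals overfitting, while high error on both signals underfitting.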

Our Approach to Data-Driven Discovery: Mitigating Pitfalls

At Explore the Cosmos, our mission is to empower you with clear explanations and practical tools for data-driven discovery. We understand that confronting complex systems, whether in space science or personal performance, requires meticulous attention to data integrity. Here’s how we advocate for mitigating these statistical pitfalls:

- Focus on Data Quality First: As the 2026 statistics clearly show, poor data quality is the root of most ML project failures. We emphasize robust data cleaning, validation, and preprocessing as foundational steps.

- Define the Problem Clearly: Before building any model, we ask: are we predicting, or are we making a decision? If it’s the latter, we encourage exploring causal inference techniques to ensure interventions are based on true cause-and-effect relationships.

- Emphasize Transparency and Explainability: Understanding *how* a model arrives at its conclusions is crucial, especially in high-stakes applications. Our content demystifies ML concepts, promoting an understanding that goes beyond just the predictive output.

- Prioritize Ethical Data Practices: Our Apple Health Cycling Analyzer is a prime example of our commitment to privacy-first, responsible data handling. By processing your health data locally in your browser, it inherently avoids the potential for large-scale data aggregation biases and privacy concerns often associated with server-side processing. This approach gives you, the user, full control and transparency over your personal data analysis.

- Continuous Monitoring and Iteration: Recognizing that data environments are dynamic, we stress the importance of ongoing model monitoring for drift and degradation, ensuring that deployed solutions remain effective over time.
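As a small illustration of the "data quality first" step in the list above, the sketch below runs basic plausibility checks on ride records before they reach any model. The field names, the ranges, and the sample rides are hypothetical and would need to be adapted to your own sensors and export format.

```python
# Hypothetical plausibility ranges; tune them to your own sensors and riders.
RANGES = {
    "heart_rate_bpm": (30, 230),
    "power_watts": (0, 2500),
    "duration_min": (1, 1440),
}


def validate(record):
    """Return a list of problems found in one ride record (empty if clean)."""
    problems = []
    for field, (low, high) in RANGES.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not (low <= value <= high):
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


rides = [
    {"heart_rate_bpm": 142, "power_watts": 210, "duration_min": 65},
    {"heart_rate_bpm": 0, "power_watts": 185, "duration_min": 48},     # sensor dropout
    {"heart_rate_bpm": 155, "power_watts": None, "duration_min": 90},  # missing power
]

clean = [r for r in rides if not validate(r)]
rejected = [(r, validate(r)) for r in rides if validate(r)]

print(f"{len(clean)} clean ride(s), {len(rejected)} rejected")
for ride, problems in rejected:
    print(problems)
```

Logging why a record was rejected, rather than silently dropping it, also makes it easier to notice when a rejection rule is itself introducing sampling bias.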

The Path Forward: Smart Data, Smarter Decisions

The world of machine learning is brimming with potential, offering unprecedented opportunities for discovery and optimization across countless domains, from unraveling cosmic mysteries to fine-tuning cycling performance. However, realizing this potential hinges on our collective ability to understand and actively counteract statistical pitfalls. It’s about moving beyond simply chasing high accuracy scores in a test environment and building models that are robust, fair, and causally sound in the real world.

By adopting a statistically informed, ethically aware, and practical approach to ML, we can build systems that truly enhance our understanding and improve our lives. Continue your journey of data-driven discovery with Explore the Cosmos – where science, data, and a commitment to robust analysis lead to meaningful insights. Explore our articles on data science fundamentals and practical analysis tools to sharpen your skills and confidently navigate the complex, rewarding cosmos of data.
